Best practices to optimize ingestion and costs

Microsoft Sentinel is a cloud-native SIEM (Security Information and Event Management) and SOAR (Security Orchestration, Automation, and Response) solution. It collects security-related data from sources such as firewalls, servers, and PaaS services to help organizations detect and respond to security threats within their IT environment. For a more detailed overview of the capabilities that Microsoft Sentinel offers, review this Microsoft Docs article.

Depending on the size of the environment and the services deployed, log ingestion and storage can account for a large portion of the cost of Microsoft Sentinel. Microsoft charges for data ingested into Sentinel on a per-GB basis. As described here, there are several ways to reduce costs by ensuring that Sentinel only processes relevant logs.

This article explores how to increase cost efficiency within Microsoft Sentinel by leveraging Log Analytics capabilities.

Azure Monitor Agent (AMA)

The AMA collects monitoring data from virtual machines regardless of where they are hosted: Azure, on-premises, or multi-cloud environments. This agent has several advantages:

- Multi-homing support: you can send data from Windows and Linux VMs to multiple Log Analytics workspaces. For example, you can send data that is not security-relevant, like the performance metrics used to understand hardware utilization, to a dedicated workspace.

- Scope of monitoring: centrally configure log collection for different sets of VMs, including different sets of data. This allows for centrally managed ‘log profiles’ to ensure valid configuration throughout the infrastructure. (See Data Collection Rules below.)

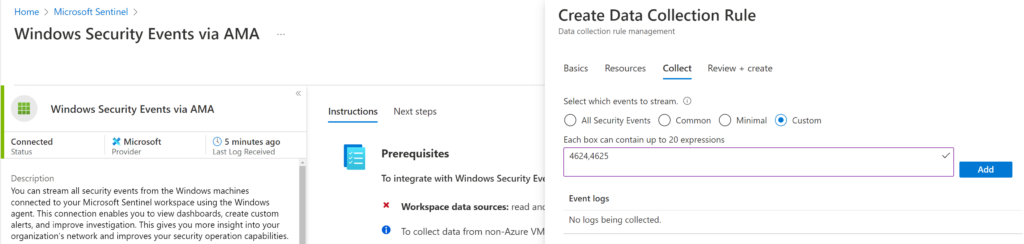

- Windows event filtering: you can use XPath queries to filter specific Windows events, like event IDs 4624 and 4625 (see configuration window in Figure 1). This further reduces the ingested volume, as it’s possible to select only the logs required for security monitoring.
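As a minimal sketch of the event filtering described above, the following Python helper assembles a filter in the `<channel>!<XPath>` form used for Windows event collection. The function name `build_xpath_filter` is hypothetical and exists only for illustration; validate the resulting string against your own configuration before relying on it.

```python
def build_xpath_filter(channel: str, event_ids: list[int]) -> str:
    """Return a '<channel>!<XPath>' filter string selecting the given event IDs."""
    if not event_ids:
        raise ValueError("at least one event ID is required")
    # Join the IDs into a single 'EventID=a or EventID=b' condition.
    condition = " or ".join(f"EventID={event_id}" for event_id in event_ids)
    return f"{channel}!*[System[({condition})]]"


# Collect only successful (4624) and failed (4625) logons from the Security log.
logon_filter = build_xpath_filter("Security", [4624, 4625])
print(logon_filter)
```

Keeping the list of event IDs in code or configuration makes it easier to review which events are actually being paid for.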

With AMA, you can filter VM security logs. AMA supports several data sources and events for use with Microsoft Sentinel. The sources in preview at the time of writing might be generally available when you read this text:

- Windows DNS logs: Private preview (sign-up link)

- Linux Syslog CEF (Common Event Format): Private preview (sign-up link)

- Windows Event Forwarding (WEF): Public preview

- Windows Security Events

Note: AMA will replace the Microsoft Monitoring Agent (MMA), due for deprecation on August 31, 2024.

Check out the Top 10 Security Events to Monitor in Azure Active Directory and Office 365 in this eBook.

Data Collection Rules (DCR)

Filtering incoming logs is essential to avoid noise and optimize your ingestion costs. For example, firewall vendor Palo Alto Networks offers a storage calculator to determine how much storage is needed per device. If we select the PA-5060 model with a quantity of 5 and high utilization, the average log rate is 4,370 events per second, which results in 26 TB of logs within 30 days. This figure includes all kinds of log data, such as bandwidth, latency, and performance, that are not relevant to security.

With DCR, a built-in feature in Log Analytics without additional license requirements, you can process data before ingesting it into Microsoft Sentinel. For instance, you can select security events and tables from your preferred firewall vendor and ingest just those events. You can add enrichments (like geo-location information for IP addresses), extract fields, and parse complex logs to align with a custom schema or use built-in schemas based on the Advanced Security Information Model (ASIM). Furthermore, you can obfuscate sensitive data for privacy and compliance, or filter out unneeded fields or entire events.
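To make the filtering idea more concrete, here is a hedged sketch of a single DCR data flow, expressed as a Python dictionary so it can be serialized to JSON. The stream name, the destination name `sentinelWorkspace`, and the column values in the query are assumptions chosen for illustration, not taken from this article; check them against your own connector and table schema.

```python
import json

# In a DCR transformation, `source` stands for the incoming stream.
# Column names and values below are assumptions for a CEF firewall feed.
TRANSFORM_KQL = (
    "source"
    ' | where DeviceVendor == "Palo Alto Networks"'
    ' | where Activity == "THREAT"'
)


def build_data_flow(stream: str, destination: str, transform_kql: str) -> dict:
    """Return one data flow entry that filters a stream before ingestion."""
    return {
        "streams": [stream],
        "destinations": [destination],  # hypothetical destination name
        "transformKql": transform_kql,
    }


data_flow = build_data_flow(
    "Microsoft-CommonSecurityLog", "sentinelWorkspace", TRANSFORM_KQL
)
print(json.dumps(data_flow, indent=2))
```

Only the records that pass the `where` clauses would be ingested, so traffic and performance records from the same device would no longer contribute to the bill.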

Table 1 gives an overview of the new log tier capabilities and how costs are determined.

| Log Tiers | Analytics Logs | Basic Logs | Archive Logs |
| --- | --- | --- | --- |
| Features | Full KQL; alerts supported; no query limits; 90 days retention included | Reduced KQL; alerts not supported; query concurrency limits; 8 days retention included (not increasable) | Batch queries with limited KQL; archive for up to 7 years |
| Ingestion charge (pay-as-you-go) | Log Analytics: $2.30/GB; Sentinel: $2.00/GB | Log Analytics: $0.50/GB; Sentinel: $0.50/GB | Data archive charge: $0.02/GB/month |
| Search query charge | N/A | Log Analytics: $0.005/GB scanned | Log Analytics: $0.005/GB scanned |
| Restore charge | N/A | N/A | Log Analytics: $0.10/GB/day |
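The price difference between tiers is easier to judge with a quick calculation. The sketch below uses the pay-as-you-go prices from Table 1 and the 26 TB firewall volume from the earlier example; it assumes 1 TB = 1,000 GB and ignores commitment tiers, so treat the result as a rough estimate only.

```python
# Pay-as-you-go ingestion prices in USD per GB, copied from Table 1.
# Prices change over time; treat these values as illustrative.
INGESTION_PRICE_PER_GB = {
    "analytics": 2.30 + 2.00,  # Log Analytics + Sentinel
    "basic": 0.50 + 0.50,      # Log Analytics + Sentinel
}


def ingestion_cost(volume_gb: float, tier: str) -> float:
    """Return the ingestion cost in USD for a volume in GB on the given tier."""
    return volume_gb * INGESTION_PRICE_PER_GB[tier]


monthly_gb = 26 * 1000  # 26 TB per 30 days, assuming 1 TB = 1,000 GB
for tier in INGESTION_PRICE_PER_GB:
    print(f"{tier}: ${ingestion_cost(monthly_gb, tier):,.2f}")
```

At these prices, the same volume costs roughly $111,800 on the Analytics tier versus $26,000 on Basic Logs, before any filtering is applied.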

Basic Logs

Basic Logs is a new log plan, currently in public preview, with a reduced ingestion cost. It is designed for high-volume, noisy sources, such as firewall and proxy logs, NetFlow logs, and Virtual Private Cloud (VPC) flow logs, that aren’t needed for detection. It suits logs traditionally regarded as having low detection value but that become invaluable during security investigations, when ad-hoc queries and search are critical. Basic Logs ingestion is priced at $1.00/GB and is currently only available when Microsoft Sentinel is activated on the respective Log Analytics workspace.

The lower price comes with caveats. Tables on the Basic Logs plan can’t be used for analytics rules or to generate alerts. The supported KQL is limited, but still sufficient to search for suspicious activity. Basic Logs are stored for eight days, and this retention can’t be increased. You can configure the plan via the Azure portal (see Figure 2), a REST API call, the Azure CLI, or the Microsoft Sentinel workbook (which makes the API calls in the background).

Note: When you change an existing table’s plan to Basic Logs, Azure archives data that is more than eight days old but still within the table’s original retention period.
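For the REST API route, a request to switch a table's plan could be assembled roughly as follows. This sketch only builds the method, URL, and body and does not send anything. The API version is an assumption to verify against the current Tables API documentation, and the subscription, resource group, workspace, and table names are hypothetical placeholders.

```python
import json

ARM_ENDPOINT = "https://management.azure.com"
API_VERSION = "2021-12-01-preview"  # assumption: verify the current version


def table_plan_request(subscription_id, resource_group, workspace, table, plan):
    """Return (method, url, body) for changing a table's log plan."""
    if plan not in ("Analytics", "Basic"):
        raise ValueError("plan must be 'Analytics' or 'Basic'")
    url = (
        f"{ARM_ENDPOINT}/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.OperationalInsights/workspaces/{workspace}"
        f"/tables/{table}?api-version={API_VERSION}"
    )
    body = {"properties": {"plan": plan}}
    return "PATCH", url, body


# All identifiers below are hypothetical placeholders.
method, url, body = table_plan_request(
    "00000000-0000-0000-0000-000000000000",
    "rg-security",
    "law-sentinel",
    "FirewallTraffic_CL",
    "Basic",
)
print(method, url)
print(json.dumps(body))
```

Sending the request additionally requires an Azure Resource Manager bearer token, which is omitted here. Not every table supports the Basic Logs plan, so check the list of eligible tables first.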

More information about the Basic Logs feature is available in this Microsoft docs article.

Archive Logs

This new plan allows you to store security logs at a lower cost for long-term storage. As you might be aware, the default Microsoft Sentinel Log Analytics workspace can retain data for up to two years. Previously, if you needed longer data retention, you had to export your data to Azure Data Explorer (ADX) or Storage accounts. With the Archive Logs tier, you can archive data for up to seven years without complex configurations or exports. Archive Logs is priced at $0.02/GB/month, and the logs are accessible via the Search UI or a Search job in the Azure portal. Like Basic Logs, Archive Logs are currently only available when Microsoft Sentinel is activated on the respective Log Analytics workspace. Use cases for Archive Logs are:

- Meet compliance requirements

- Archive data up to seven years for forensic reasons

Data tables enabled for archival automatically roll over into the Archive Logs tier after they exceed the configured retention period in the Microsoft Sentinel workspace. Similar to Basic Logs, the configuration can take place via REST API call, Azure CLI, and Microsoft Sentinel workbook. More information about the Archive Logs feature can be found in the Microsoft Tech Community article.
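The rollover described above can be pictured as two retention values per table: an interactive period and a total period, with the difference spent in the archive. The sketch below models this with the `retentionInDays` and `totalRetentionInDays` table properties. The seven-year ceiling is approximated as 7 × 365 days, which is an assumption rather than the documented limit.

```python
MAX_TOTAL_RETENTION_DAYS = 7 * 365  # assumption: approximates the seven-year limit


def archive_settings(interactive_days: int, total_days: int) -> dict:
    """Return a table properties body with interactive and total retention."""
    if not 0 < interactive_days <= total_days <= MAX_TOTAL_RETENTION_DAYS:
        raise ValueError("expected 0 < interactive <= total <= maximum retention")
    return {
        "properties": {
            "retentionInDays": interactive_days,
            "totalRetentionInDays": total_days,
        }
    }


def archived_days(settings: dict) -> int:
    """Return how many days data spends in the archive after interactive retention."""
    props = settings["properties"]
    return props["totalRetentionInDays"] - props["retentionInDays"]


settings = archive_settings(interactive_days=90, total_days=730)
print(archived_days(settings))  # 640
```

In this example, data is queryable interactively for 90 days and then stays in the archive for a further 640 days.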

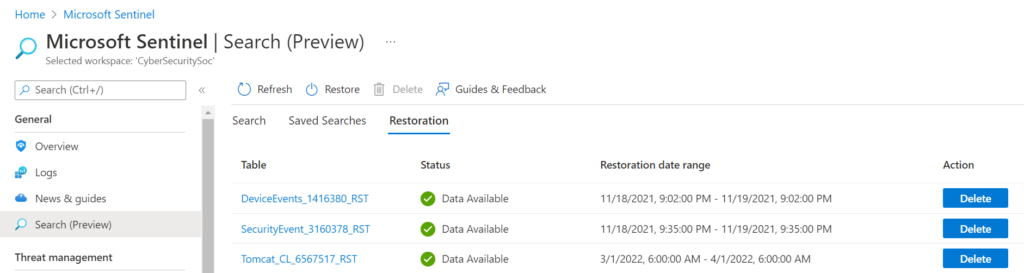

Search Jobs

Search Jobs let you query across all available data, no matter the log tier (Analytics, Basic, Archive). For example, if you are investigating a specific entity, like a malicious IP address, you can query all tiers of logs in a single job. Search job queries are slower than standard queries, due to the nature of the Basic and Archive log tiers. Additionally, you can restore data from both the Basic and Archive tiers to the Analytics tier to run your queries with full performance or to use advanced hunting capabilities. (See Figure 3 below.)
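As a rough illustration of the malicious IP example, the sketch below builds the name and body of a search job request. It assumes the results table name must end in `_SRCH` and that the body carries a `searchResults` object, so verify both against the current Tables API reference. The table, job name, and IP address are hypothetical.

```python
from datetime import datetime, timezone

TIME_FORMAT = "%Y-%m-%dT%H:%M:%SZ"


def search_job_request(source_table, ip_address, start, end, job_name, limit=1000):
    """Return (results_table_name, body) for a search job over one table."""
    results_table = f"{job_name}_SRCH"  # assumed suffix for search result tables
    body = {
        "properties": {
            "searchResults": {
                # Match the IP address in any column of the source table.
                "query": f"{source_table} | where * has '{ip_address}'",
                "limit": limit,
                "startSearchTime": start.strftime(TIME_FORMAT),
                "endSearchTime": end.strftime(TIME_FORMAT),
            }
        }
    }
    return results_table, body


# Hypothetical investigation of a documentation-range IP address.
name, body = search_job_request(
    "CommonSecurityLog",
    "203.0.113.7",
    datetime(2022, 1, 1, tzinfo=timezone.utc),
    datetime(2022, 3, 31, tzinfo=timezone.utc),
    "SuspiciousIp",
)
print(name)
print(body["properties"]["searchResults"]["query"])
```

Narrowing the time range keeps the amount of scanned data, and therefore the search charge, as small as the investigation allows.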

Note: Data restoration is not free and is charged based on the amount of data to be restored per day.

Summary

With the new Azure Monitor Agent, Data Collection Rules, and the three Log Analytics workspace log tiers, you can control log ingestion at a more granular level and save money at the same time. As there is no need to set the entire Analytics tier to Basic or Archive, you can choose specific tables and still meet your organization’s compliance needs.
