I recently dissected a problem in an Exchange Server organization that I have decided to share here, as much for the technical root cause as for the troubleshooting steps that were involved.

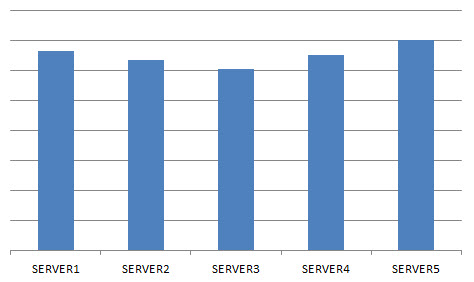

The problem first appeared as an imbalance in the volume of email traffic that each Hub Transport server in one particular site was handling.

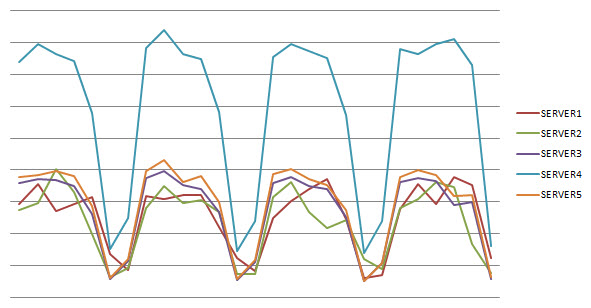

This was picked up in some routine performance monitoring. The daily email traffic for each of the five Hub Transport servers in this site over the last 30 days was calculated using message tracking log analysis, and the data was used to generate this graph.
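The counting step behind a graph like this can be sketched roughly as follows. This is a minimal Python illustration rather than the script used for the analysis above. It assumes the message tracking log entries have already been exported as (timestamp, server name) pairs; the function name `daily_counts` and the sample data are hypothetical.

```python
from collections import Counter
from datetime import datetime


def daily_counts(entries):
    """Count tracked messages per (date, server).

    `entries` is an iterable of (timestamp, server_name) pairs, assumed to
    have been exported from the message tracking logs beforehand.
    """
    counts = Counter()
    for timestamp, server in entries:
        counts[(timestamp.date(), server)] += 1
    return counts


# Made-up sample data for illustration only.
sample = [
    (datetime(2012, 5, 1, 9, 15), "SERVER1"),
    (datetime(2012, 5, 1, 9, 20), "SERVER4"),
    (datetime(2012, 5, 1, 14, 5), "SERVER4"),
    (datetime(2012, 5, 2, 8, 30), "SERVER4"),
]

for (day, server), total in sorted(daily_counts(sample).items()):
    print(day, server, total)
```

The per-day totals for each server can then be charted in a spreadsheet.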

The heavy days are weekdays (Monday to Friday) and the dips are the weekends.

That trend is not surprising, but what did catch my eye was the way SERVER4 is handling twice the traffic of any other server in that site. The other four servers are each handling roughly the same amount, but SERVER4 stands out above them all.

How Exchange Hub Transport Servers Load Balance Traffic

This particular site is not internet-facing. In other words it is not responsible for email traffic going in and out of the Exchange organization.

Therefore, the Hub Transport servers in this site are primarily handling messages between mailboxes within that site, or messages to/from other sites (whether for mailboxes in the other sites, or to/from the internet via one of the internet-facing sites).

Hub Transport servers in this scenario do not need any special load balancing configuration applied. Within an Active Directory site, and for email traffic between sites, Exchange performs its own form of automatic load balancing.

In effect, the Exchange server will look at the list of available Hub Transport servers for the route an email message needs to take, randomize that list of servers, and then try each of them starting with the first in the list until it finds one that is able to accept the message. Unless there is a fault of some kind it will usually send to the first Hub Transport server in the randomized list.

So while it doesn’t perfectly load balance the traffic, it should do so within a pretty small degree of variation.
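To make the "randomize, then try in order" idea concrete, here is a small Python simulation. It is a simplified model of the behaviour described above, not Exchange's actual selection code. The names `pick_server` and `simulate` and the `accepts` callback (which stands in for "this server is able to accept the message") are assumptions made for the sketch.

```python
import random
from collections import Counter


def pick_server(servers, accepts, rng):
    """Shuffle the candidate list and return the first server that accepts."""
    candidates = list(servers)
    rng.shuffle(candidates)
    for server in candidates:
        if accepts(server):
            return server
    return None  # no server could accept the message


def simulate(servers, accepts, messages=50_000, seed=1):
    """Deliver `messages` messages and tally which server took each one."""
    rng = random.Random(seed)
    tally = Counter()
    for _ in range(messages):
        chosen = pick_server(servers, accepts, rng)
        if chosen is not None:
            tally[chosen] += 1
    return tally


servers = ["SERVER1", "SERVER2", "SERVER3", "SERVER4", "SERVER5"]
healthy = simulate(servers, accepts=lambda s: True)
for name in servers:
    print(name, healthy[name])
```

When every server accepts, each one ends up with close to a fifth of the messages. Passing an `accepts` function that rejects some servers shows how their share shifts to the remaining ones.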

Checking for Hub Transport Load Spikes

One of my first thoughts was that perhaps SERVER4 is being hit with a spike of traffic at some period of the day that is causing it to record more email traffic each day. It is entirely possible that some rogue device or application has been hard-coded to directly address SERVER4 for its SMTP needs.

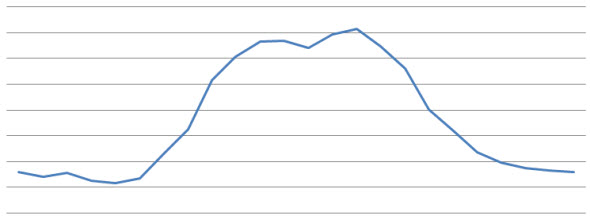

I once again used message tracking log analysis of the past 30 days to generate a graph of the average email traffic across each hour of the day.

The graph turned out to be unremarkable, with no load spikes visible.

Checking for Top Senders

Since there is no apparent load spike, but the possibility remains that a particular host or application is sending a high volume of email throughout the day, the next angle of attack was to check the top senders (i.e. remote IP addresses) for email traffic through this server.

The results again were fairly unremarkable. But I also took a few extra moments to run the same message tracking log analysis on the other four Hub Transport servers in the site. This is where things took an interesting turn.

Where SERVER3-5 each showed an expected result for the top senders, SERVER1-2 showed interesting results. Those two servers had plenty of remote IP addresses logging hits on them, but a complete lack of any other Exchange servers in that list.

In other words, it began to appear that SERVER1 and SERVER2 were not handling any Exchange -> Exchange email traffic.
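The check amounts to counting hits per remote IP address and then looking for known Exchange server addresses among them. A minimal Python sketch of that idea follows. It assumes the remote IP addresses have already been extracted from the message tracking logs into a list, and the addresses and function names are made up for illustration.

```python
from collections import Counter


def top_senders(client_ips, limit=10):
    """Return the most frequent remote IP addresses with their hit counts."""
    return Counter(client_ips).most_common(limit)


def exchange_hits(client_ips, exchange_ips):
    """Count hits that came from known Exchange server addresses."""
    known = set(exchange_ips)
    return Counter(ip for ip in client_ips if ip in known)


# Hypothetical addresses for illustration only.
exchange_ips = ["10.1.1.11", "10.1.1.12"]
hits_on_server1 = ["10.1.5.20", "10.1.5.20", "10.1.6.31", "10.1.5.20"]
hits_on_server3 = ["10.1.1.11", "10.1.5.20", "10.1.1.12", "10.1.1.11"]

print(top_senders(hits_on_server1))
print(exchange_hits(hits_on_server1, exchange_ips))
print(exchange_hits(hits_on_server3, exchange_ips))
```

An empty result from `exchange_hits` for a server is the pattern described above: plenty of remote IP addresses, but no other Exchange servers among them.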

So what was all the email traffic they were logging?

SMTP Relay Traffic

Within this site we make available a DNS alias for applications and devices that need to use an SMTP service to send alerts or reports via email. This DNS alias is load balanced across both SERVER1 and SERVER2.

Now, considering that SERVER1 and SERVER2 should be processing their fair share of normal Exchange traffic, as well as the additional load of SMTP relay from applications and devices, you would expect their daily traffic graphs to be higher than the others in the site.

Instead, this is what a single day’s email traffic amounts to on each Hub Transport server.

To confirm the suspicion that SERVER1 and SERVER2 were not processing any Exchange -> Exchange traffic at all I analysed the message tracking logs for hits from a sample of Hub Transport servers in other sites. When this data was collated the graph looked like this.

Suspicion confirmed.

SERVER1 and SERVER2 are processing no intra-org email traffic coming in from other sites, and SERVER4 is having to pick up the slack. Although I was curious why the traffic still hadn’t evenly load balanced across the other three Hub Transport servers, that was not the primary concern.

The real concern is why this is happening, and how do we fix it?

What is Causing the Intra-Org Email Traffic Imbalance?

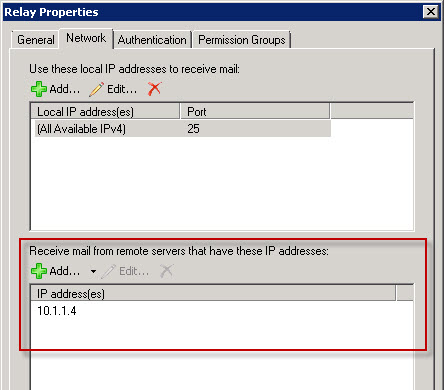

The root cause comes back to the SMTP relay configuration that is in place. SERVER1 and SERVER2 each have an additional Receive Connector configured for SMTP relay.

At the time these were implemented the servers had no additional network interfaces available in them, so the new Receive Connectors are bound to the same interface as the Default Receive Connector.

While this is something you can get away with, it is generally recommended that you dedicate an interface to a relay connector like this for reasons that I’m about to demonstrate.

When two Receive Connectors share the same interface and IP address they use the list of remote IP addresses configured on the connectors to determine which one should handle a particular connection.

Generally speaking the most specific match will determine which connector accepts a connection.

The Default Receive Connector specifies a remote IP range that could be described as “everything”.

When sharing an IP address between the default connector and the relay connector it is easy for the server to determine that a connection from a specific IP address that is explicitly listed in the remote IP list of the relay connector should be handled by the relay connector.

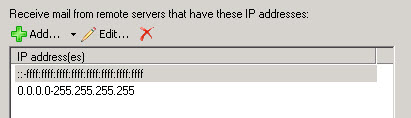

The trouble begins when administrators take a shortcut and add entire subnets to the remote IP address list on the relay connector. If that subnet also contains other Exchange servers, connections from those Exchange servers will be processed by the relay connector, not by the default connector, because the subnet is considered more specific than the “everything” range that is on the default connector.
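The "most specific match wins" rule can be illustrated with a short Python sketch. This is a model of the rule as described in this article, not Exchange's implementation. It assumes the remote IP ranges are written in CIDR notation, and the connector names, addresses and the `select_connector` function are hypothetical.

```python
import ipaddress


def select_connector(connectors, client_ip):
    """Pick the connector whose matching remote range is the most specific.

    `connectors` maps a connector name to a list of CIDR ranges. The range
    with the longest prefix that contains `client_ip` wins.
    """
    address = ipaddress.ip_address(client_ip)
    best_name, best_prefix = None, -1
    for name, ranges in connectors.items():
        for cidr in ranges:
            network = ipaddress.ip_network(cidr)
            if address in network and network.prefixlen > best_prefix:
                best_name, best_prefix = name, network.prefixlen
    return best_name


connectors = {
    "Default": ["0.0.0.0/0"],                  # the "everything" range
    "Relay": ["10.1.5.20/32", "10.1.1.0/24"],  # one host entry and one whole subnet
}

print(select_connector(connectors, "10.1.5.20"))    # explicitly listed application host
print(select_connector(connectors, "10.1.1.11"))    # Exchange server inside the subnet
print(select_connector(connectors, "192.168.7.9"))  # any other address
```

In this model the Exchange server at 10.1.1.11 lands on the relay connector purely because the /24 entry is more specific than the default range, which is the situation described above.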

A receive connector configured for SMTP relay usage by non-Exchange systems does not have a configuration that Exchange likes when it comes to Exchange -> Exchange communications. So, the connection fails and the Exchange server will attempt to use a different Transport server.

In our case the connections to SERVER1 or SERVER2 were continually failing and SERVER4 was handling the extra load.

How to Fix the Transport Load Imbalance

With the root cause clearly identified the possible solutions were clear. We could either:

- Configure dedicated IP addresses for the relay connectors so that there is no confusion as to which receive connector the server should use to handle Exchange traffic vs application relay traffic.

- Remove all of the subnet entries from the remote IP address lists and replace them with only the specific IP addresses that should be permitted to relay.

Because the situation with available network interfaces had not changed we went with option 2, with a note to use dedicated interfaces for relay connectors in future designs.
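Before tidying up the remote IP address lists it helps to know which entries are the problem. The following Python sketch flags relay connector ranges that cover an Exchange server address. It assumes the ranges are in CIDR notation and that the list of Exchange server IP addresses is known; the function name and all addresses are made up for illustration.

```python
import ipaddress


def risky_relay_entries(relay_ranges, exchange_ips):
    """Return relay ranges that cover at least one Exchange server address."""
    servers = [ipaddress.ip_address(ip) for ip in exchange_ips]
    risky = {}
    for cidr in relay_ranges:
        network = ipaddress.ip_network(cidr)
        covered = [str(ip) for ip in servers if ip in network]
        if covered:
            risky[cidr] = covered
    return risky


# Hypothetical relay connector entries and Exchange server addresses.
relay_ranges = ["10.1.5.20/32", "10.1.1.0/24"]
exchange_ips = ["10.1.1.11", "10.1.1.12", "10.2.1.11"]

print(risky_relay_entries(relay_ranges, exchange_ips))
```

Any range reported here is a candidate for replacement with the specific application or device addresses that actually need to relay.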

The outcome is a more balanced load among the Hub Transport servers in the site, now completely in line with expectations and providing better performance and resiliency.

Just in case others have the same issue: we were experiencing this behavior on some boxes used purely for SMTP relay. The issue turned out to be that when there is no mailbox present in the org for the user who has authenticated, the SMTP session gets proxied to the mailbox server hosting the database containing SystemMailbox{bb558c35-97f1-4cb9-8ff7-d53741dc928c}. In our scenario one server was therefore handling 90% of the traffic, because the SMTP service accounts being used did not have a local mailbox.

Ben, thank you for this comment.

I introduced a new mail server and mail flow works, but I was racking my brain over why the mails I relayed for testing always got proxied to one old mail server, despite there not being any relay configured for it. Documentation on mail routing did not make me much wiser, until I saw your post and moved the system mailboxes to the new server. Now the mail moves via the new server.

At least now I understand

One more query: we are facing an error where MAPI sessions increase, and because of that Outlook disconnects automatically.

Error – 9646

Mapi session “fdff865f-a9f3-4ca3-a7ac-7df66f705517: /o=IFF/ou=Exchange Administrative Group (FYDIBOHF23SPDLT)/cn=Recipients/cn=mxk4094tcs695” exceeded the maximum of 500 objects of type “objtFolderView”.

The Microsoft Exchange Information Store service terminated unexpectedly. It has done this 1 time(s). The following corrective action will be taken in 5000 milliseconds: Restart the service.

That really has nothing to do with this article. If you do some Google searches for that error there are a lot of examples of how to fix it.

So, finally we got it.

Could you share your email with me for my other concern, if possible?

Regards,

Akhil Chopra

Yes, you are right, it will cost the company money. But I know there is a command where we can separate a hub server from the other hub servers to take mail traffic.

Anyway, thanks, I will find out.

Regards,

Akhil Chopra

I guess you mean this?

http://www.blankmanblog.com/general-news/tips-tricks/restricting-hub-transport-server-selection-using-submissionserveroverridelist/

But if I place the hub server in a separate AD site, then the application servers will have a very long route to relay mail.

No, an AD Site boundary is defined by IP subnets. The AD Site can be physically located wherever you want it to be, you simply need to define it separately in AD Sites & Services, because Exchange uses AD Sites as its routing topology.

If you absolutely have to have a Hub Transport server that is only used for application relay then I don’t see any other option right now. But it will cost money, because you’ll need to build at least one AD domain controller in that new AD Site as well.

Hi Paul,

Is there any command where we can set a hub server to take only application mail traffic, not all traffic?

As we know, by default a hub server shares load with the other hub servers as part of routing.

Any idea? I know this is possible.

Regards,

Akhil chopra

I suppose you could achieve this by placing the Hub Transport server in a separate AD Site.

Hi Paul – great Article.

I believe that my colleague Ian Shapton has added comments on another Transport article.

It appears that we have something very similar and strange going on.

We have E2013 installed, and all Internet mail flows IN Via 2013 CAS and onto our 2013 Mailbox Servers (4).

At this point the 2013 Transport Services send the message to our recipients on E2007 via E2007 HT Servers.

The IP addresses of the 2013 servers are listed on the Server1 HT custom relay Receive Connector, but not listed on the otherwise identical Server2 HT custom relay Receive Connector.

All Internet mail is routed ONLY via the HT server that does NOT have the IP addresses listed on our relay (port 25) connector.

We believe that because the 2013 server IPs are listed on Server 1, Server 1 tries to answer with this Receive Connector, fails, and so the mail goes to Server 2, where it is accepted by the “Default Receive Connector”.

We need to allow our 2013 servers to relay mail too (for Symantec and PowerShell etc.) and think we might need to create an additional connector on both E2007 HT servers.

What port is used between 2013 and 2007? Is it 587 or 25?

Thank you.

Transport servers talk to each other on port 25. You are correct that having your Exchange 2013 server IPs on a relay connector on the 2007 HT will stop mail flowing from those 2013 servers to that 2007 server.

What are your actual needs for relaying via the 2007 HT servers? If the 2013 server is generating emails from scripts or other apps, and those are going to internal recipients, you don’t need any special relay connectors configured anywhere.

Thank you so very much for posting this. We were seeing this exact issue in our environment, and, at least for us, removing the default routes and narrowing them down to specific IPs seems to have worked.

Paul, this is great.

Could you tell me how you got the first graph, “Traffic Load Pattern for Hub Transport Servers”? You posted scripts to calculate daily traffic for each server, but how can I combine them into one graph?

Best regards,

Oleg.

I used Excel to combine the data and create the chart.


Have sort of the same problem. 3 HT in NLB. I followed your guide and have dedicated IP addresses for receive connectors. Still, one of the HT servers is processing 60000 messages daily while the other two are processing about 20000. Would that be a result of the NLB?

Hi:

I have an issue with inter-site load imbalance. I have three transport servers connected to an Exchange 2003 server with a routing connector, and traffic is going through only one transport server.

Do you have any experience with that issue?

Thanks and kind regards

Good work Paul,

Well presented, keep up the good work.