Knowing What’s in a Site Document Library is Difficult

Often, I learn when the need arises to do something. For instance, SharePoint Online sites are fine repositories for documents and other information, but they can be hard to control in terms of what is in document libraries or know about everything stored in SharePoint site files. Few of us have the patience (or time) to review the contents of every folder in a document library to decide what should be retained and what is past its best by date. Tools like retention policies and labels help by removing items automatically after their retention period lapses, but these are broad-brush blunt mechanisms that deal with every item in the same way rather than on an item’s merits.

Which brings me to my opportunity for learning. The Office 365 for IT Pros team has been working on our eBook since 2014. Over nine editions and countless revisions, we have accumulated a large amount of content in the SharePoint Online site we use. It’s easy to find out how much storage a site uses through the SharePoint admin center or with PowerShell, but that doesn’t tell us what’s in a site. I wanted to know what documents were in what folders, the size of the documents, and their authors.

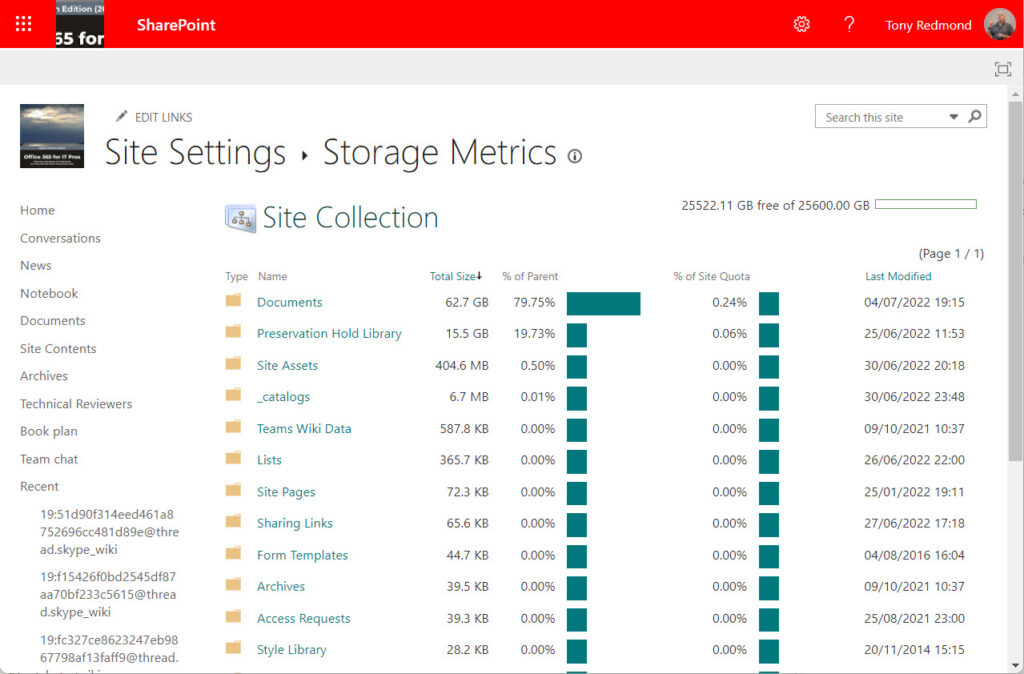

Searches for a feature to do the job found nothing. Microsoft 365 includes SharePoint activity reports and file and folder sharing reports and usage reports. Site contents will tell me the total number of files in the document library (and even more in the preservation hold library), and the storage metrics for the site informs me about storage consumption (Figure 1). But nowhere do I find a simple listing of documents.

Processing SharePoint Site Files with PowerShell

Traditionally, PowerShell modules have handled the automation of administrative activities, like generating a list of SharePoint Online sites or Microsoft 365 Groups. The SharePoint Online PowerShell module is firmly in this tradition and doesn’t offer any ways to navigate within a site and report what’s found there. The PnP PowerShell module includes cmdlets to open folders and list files found there, and I’m sure that you could create a document report with its cmdlets.

I chose to use Microsoft Graph API requests instead on the basis that I knew the Graph better than PnP. More importantly, I had some code that I could reuse (rule number 1 of any PowerShell project is to find some code to start off). In this case, I had a script to decrypt protected SharePoint files by removing sensitivity labels from the files.

Steps to Create a SharePoint Site Files Report

Conceptually, the steps to create a report listing the files found in a SharePoint Online site are straightforward:

- Connect to the Microsoft Graph with the correct permissions. It’s always a good idea to run the Disconnect-MgGraph cmdlet beforehand to remove any previous session.

- Identify the target site.

- Find the document library (for the purpose of this article, we assume the target site has a single document library; this is usually the case for most sites today, especially those connected to Teams).

- Find the folders in the document library.

- Report the documents stored in the folders and any sub-folders in those folders.

Although I use Graph API requests in the script to retrieve information about folders and files, I decided to use the Invoke-MgGraphRequest cmdlet from the Microsoft Graph PowerShell SDK to run the requests instead of a cmdlet like Invoke-RESTRequest. Using the SDK means that I didn’t have to create a registered app in Azure AD to hold the necessary Sites.Read.All permission needed to read site data. This is an acceptable method to use on a one-off basis. In production, it would be better to use a registered app and a certificate for authentication. The Microsoft Graph PowerShell SDK fully supports this approach, which has these advantages:

- It avoids the problem with accumulation of permissions on the service principal used by the Microsoft Graph PowerShell SDK.

- It allows the use of application permissions rather than delegated permissions. In practical terms, this means that an administrator can run a report for any site. Interactive use of the Microsoft Graph PowerShell SDK uses delegated permissions, which means that the person running the script can only report sites they are a member of.

With those thoughts in mind, let’s consider some points of interest in the implementation.

Drives, Items, and Folders

The Microsoft Graph deals with SharePoint (and OneDrive) document libraries like computer drives (here’s an article about reporting OneDrive for Business shared files). After identifying the target site, the next step is to fetch whatever drives exist within the site. The third line looks for a drive named Documents. This is the English language version of the default document library. You’ll probably have to change this to make the script work against sites configured in other languages.

$Uri = "https://graph.microsoft.com/v1.0/sites/$($Site.Id)/drives"

[array]$Drives = Invoke-MgGraphRequest -Uri $Uri -Method Get

$DocumentLibrary = $Drives.Value | ? {$_.name -eq "Documents"}

Next, we get the items in the document library. These can be individual files and they can also be folders. Here, I fetch the contents and then filter them into folders and files.

Next, we get the items in the document library. These can be individual files and they can also be folders. Here, I fetch the contents and then filter them into folders and files.

$Uri = "https://graph.microsoft.com/v1.0/sites/$($Site.Id)/drives/$($DocumentLibrary.Id)/root/children"

[array]$Items = Invoke-MgGraphRequest -Uri $Uri -Method Get

# Find sub-folders that we need to check for files

$Folders = $Items.Value | ? {$_.Folder.ChildCount -gt 0 }

# And any files in the folder

$Files = $Items.Value | ? {$_.Folder.ChildCount -eq $Null}

One important point is that the code doesn’t include anything to handle pagination. Graph API requests limit the number of items they return to avoid problems which might happen if they returned very large amounts of data. In this instance, the Invoke-MgGraphRequest cmdlet returns up to 200 items. This should be enough for the root of a document library, but it might not be. If this is the case, you’ll need to check if the information returned by the request includes a nextlink (a URI to the next page of available data). If a nextlink exists, the script needs to run another Graph request to follow the link to retrieve the waiting data. You can see how to do this in the GetFilesFromFolder function in the script.

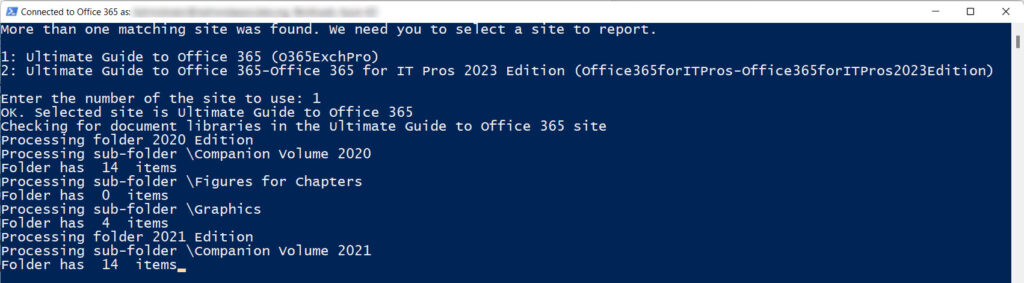

After finding the files and folders, the script reports what it discovers (Figure 2) and creates a report. Processing is rapid because the script only reads information. Generating a report for a site holding 1,308 documents took about five seconds. Another site with 7,512 documents took 15.

Output

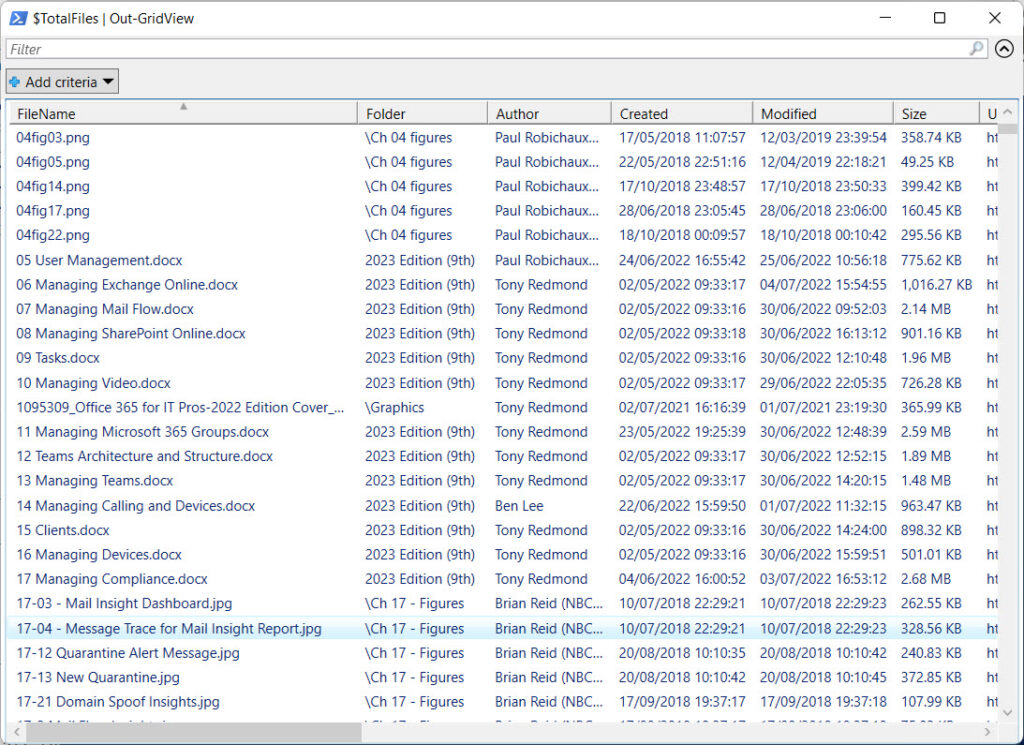

The output is in a PowerShell list, so it’s easy to generate whatever output you prefer. I often use the Out-GridView cmdlet to review the output of a script (Figure 3), but you could also output a CSV file, an Excel spreadsheet (using the excellent ImportExcel PowerShell module), or generate a HTML file. For the latter, you could try the PSWriteHTML module (see its documentation for examples).

Not a Complete Solution

It’s important to emphasize that the script (downloadable from GitHub) is not a complete solution. Instead, it’s a proof of concept to demonstrate how to interact with SharePoint document libraries using Graph API requests. Lots more could be done to improve error handling, handle pagination for the root folder, handle multiple layers of folders, deal with sites that have multiple document libraries, and so on. I’ve tested the code against multiple sites, and it appears to work well. At least, it does in my tenant. I’m interested in what others think and the improvements you make. The script is, after all, just PowerShell code, so anyone can change (improve) it.

Tony, hello. Could this approach be used to capture File Statistics (# of views in a time frame, for each file in a targeted DL)? (We can see those stats when a specific file is selected, then details in the file ‘fly-out’, then views in that fly-out details pane for the specific file (last 4, 30, 60 days time frames..)

I think this is possible using the https://learn.microsoft.com/en-us/graph/api/itemactivitystat-getactivitybyinterval?view=graph-rest-1.0&tabs=http ItemActivityStat API.

Hi Tony,

Thank you for all of the great tutorials and scripts, they provide a great starting point and are very helpful when trying to understand how to approach a task using PowerShell.

That said, I’ve encountered an issue in “While ($NextLink) Loop” in the “ReportFilesSPOSite.PS1” script.

I’ve found 3 instances in our tenant where will continuously loop without exiting.

In all cases the $Nextlink variable doesn’t change, and the output is the same for 2 cycles, then it returns and starts the looping over the same folder again.

I’ve found that I’m able to get around the issue by removing “-IsNextLink $true” from:

UnpackFilesRecursively -Items $MoreData -SiteUri $SiteUri -FolderPath $FolderPath -SiteFiles $SiteFiles -IsNextLink $true

Can you explain why -IsNextLink $true needed here?

Any ideas on what could be happening?

Thank you!

I have no idea . I just looked at the script for the first time in about 18 months and made some slight updates to take account of the current SDK version and make Visual Studio happy with the code (it doesn’t like shortcuts like ? for Where-Object). The code in the UnpackFilesRecursively function looks OK and worked well when I tried it with a couple of document libraries. But hey, it’s PowerShell, so feel free to investigate and fix any bugs you find!

Hi Tony,

Thank you for the script. I think it’s the only one available in public domain with Graph API approach.

I am experiencing the same loop problem described above and removing the -IsNextLink $true is not fixing it either. It’s happening with folders with more than 100 items specifically and it takes several cycles to exit the loop. For folder with more than few thousand items, the loop goes on forever. If you can point me in right direction will be greatful

The problem might be a bug in the underlying API. The nextlink is simply a URL pointing to the next page of data that a Graph query finds, so it’s the responsibility of the underlying workload to provide that data by responding to the request in the nextlink URL. I haven’t seen a problem with the nextlink in my tests but I am not running code against your sites and document libraries. If you have a problem, you should report it to Microsoft and have their engineers look at code running in your tenant. I can only guess at what might be happening and I prefer not to guess.

Could this be used to determine files with and without a Retention label applied? Would be useful for tracking rollout of labels.

https://practical365.com/report-sensitivity-labels-sharepoint-online/

I’m trying to call the creation date of the sharepoint sites in the api graph report, but I can’t get it to bring me data, I don’t know if I’m correctly calling the field $Site.” createdDateTime”

$Site.CreatedDateTime

Friday 29 May 2015 10:18:05

I use Excel and Power Query to extract file metadata from an entire site.

It’s then straightforward to present the data in Sliceable report tables for the entire team to use in determining file disposition.

Thanks for posting your scripts – they’re good learning tools.

Sounds like a good idea. Maybe you’d write up the approach in an article for us?